UX metrics are central to product decisions, giving teams data to guide interface optimization. However, hidden biases can compromise their reliability, leading teams to misinterpret user behavior and build features that miss the mark.

These measurement errors stem from various cognitive and methodological biases that affect data collection and interpretation. From flawed testing methods to incomplete user samples, these biases create blind spots that prevent teams from seeing the full picture of user interaction patterns. Understanding and addressing these biases helps teams create more accurate measurement frameworks that capture genuine user needs.

Understanding Bias in UX Metrics

Cognitive biases affect user research at every stage, from planning to analysis. These mental shortcuts and presumptions can lead teams to overlook critical insights and reinforce existing assumptions about user behavior. Product teams often miss these biases because they operate below the surface of conscious decision-making.

Many UX professionals believe their analytical training protects them from bias, yet this confidence itself can become a blind spot in their research process. Recognizing how biases influence decision-making marks the first step toward building more reliable research methods.

The Impact of Confirmation Bias

When UX teams start with strong preconceptions, they often seek data that supports their existing beliefs. This selective attention creates blind spots in research, causing teams to dismiss contradictory findings that might reveal genuine usability issues. A product team might focus on positive feedback about a new feature while minimizing reports of confusion or frustration.

Teams might also structure test scenarios that inadvertently guide users toward expected behaviors. For instance, task instructions might hint at preferred navigation paths, preventing the discovery of natural user patterns.

Information Processing Distortions

Information bias clouds judgment when teams collect data without clear objectives. This overabundance of metrics can obscure meaningful patterns and lead to analysis paralysis. Teams caught in this cycle often track every possible interaction metric but lose sight of which measurements actually predict user satisfaction and product success.

Raw numbers tell incomplete stories without proper context. A high task completion rate might mask underlying usability problems if users struggled but persevered through poor design. Similarly, time-on-task metrics might mislead if teams don’t account for users’ varying expertise levels.
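
As a rough illustration of why a single completion rate can mislead, the sketch below reports completion alongside a simple struggle indicator and splits time-on-task by expertise. The session records, field names, and the three-error threshold are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Session:
    """One participant's attempt at a task (hypothetical fields)."""
    expertise: str   # e.g. "novice" or "expert"
    completed: bool
    errors: int      # wrong clicks, backtracks, and similar missteps
    seconds: float   # time on task


def summarize(sessions, struggle_errors=3):
    """Report completion rate next to signals that it may hide struggle."""
    if not sessions:
        raise ValueError("no sessions to summarize")
    completed = [s for s in sessions if s.completed]
    # Completed sessions with many errors: users who persevered through friction.
    struggled = [s for s in completed if s.errors >= struggle_errors]
    seconds_by_expertise = {}
    for s in completed:
        seconds_by_expertise.setdefault(s.expertise, []).append(s.seconds)
    return {
        "completion_rate": len(completed) / len(sessions),
        "struggled_share_of_completions": (
            len(struggled) / len(completed) if completed else 0.0
        ),
        "median_seconds_by_expertise": {
            level: median(times) for level, times in seconds_by_expertise.items()
        },
    }


# Made-up sessions for illustration only.
sessions = [
    Session("expert", True, 0, 40.0),
    Session("expert", True, 1, 50.0),
    Session("novice", True, 4, 150.0),
    Session("novice", True, 5, 170.0),
    Session("novice", False, 6, 200.0),
]
report = summarize(sessions)
print(report)
```

In this made-up data the completion rate looks healthy at 80%, yet half of the completed sessions crossed the struggle threshold, and novices took several times longer than experts.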

Identifying Bias in Usability Testing

Recognizing bias requires a systematic examination of research methods, participant selection, and data interpretation approaches. Small oversights in any of these areas can accumulate into significant measurement errors. Teams need clear protocols to spot potential biases before they affect research outcomes. A structured approach to bias detection helps teams catch issues early in their research process rather than discovering problems after making key product decisions.

Regular audits of research practices reveal subtle biases that might otherwise go unnoticed. Teams that build bias checks into their workflow find more reliable patterns in their user data and make more informed design choices.

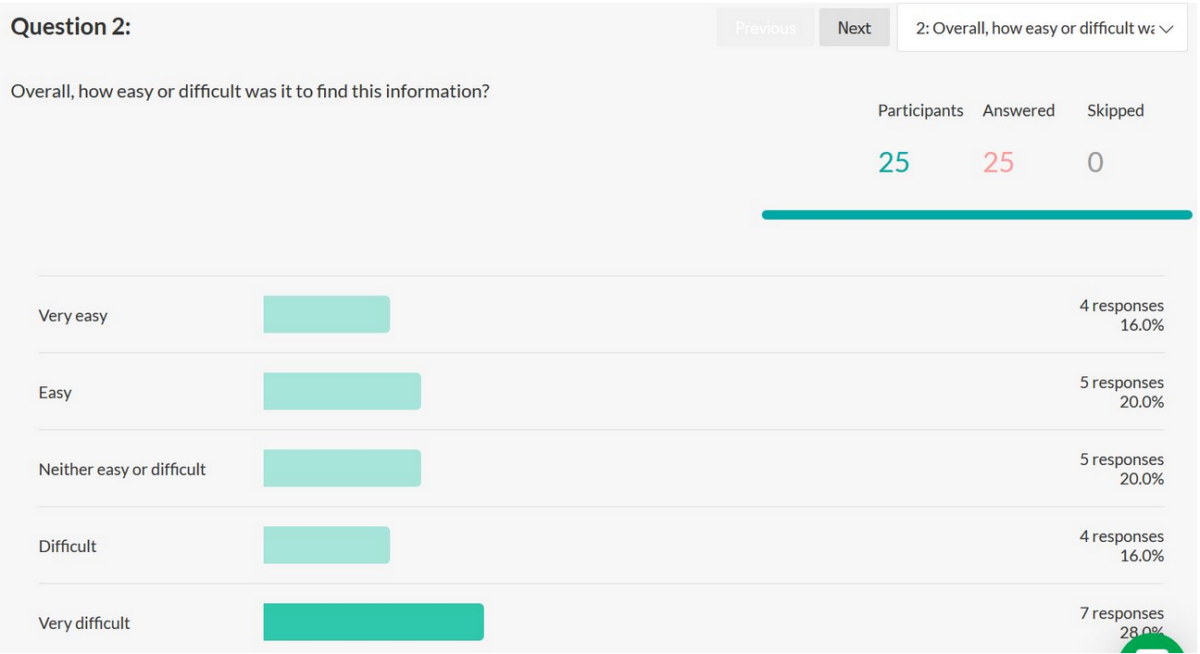

Sample Selection Skew

User research loses value when participant groups don’t match target demographics. Teams sometimes recruit convenient samples, like internal employees or tech-savvy early adopters, that poorly represent actual users. This mismatch creates a false picture of product usability, as test participants might navigate interfaces differently than the intended audience.

Geographic, cultural, and technological factors influence how people interact with products. A banking app tested only with urban smartphone users might fail to address the needs of rural customers with limited internet access. Testing with narrow user segments builds products that serve some users well while creating barriers for others, leading to skewed success metrics that mask accessibility issues.
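
One simple way to make sample skew visible is to compare the recruited mix against the intended audience before testing begins. The sketch below assumes the team already has target shares per segment. The segment names, the shares, and the 10-point tolerance are hypothetical.

```python
from collections import Counter


def representation_gaps(participants, target_shares):
    """Return (sample share - target share) for every segment."""
    if not participants:
        raise ValueError("no participants recruited")
    counts = Counter(participants)
    total = len(participants)
    # Include segments that appear in only one of the two sources.
    segments = set(target_shares) | set(counts)
    return {
        segment: counts.get(segment, 0) / total - target_shares.get(segment, 0.0)
        for segment in sorted(segments)
    }


# Hypothetical target audience mix and recruited sample.
target = {"urban": 0.6, "rural": 0.4}
recruited = ["urban"] * 9 + ["rural"] * 1

gaps = representation_gaps(recruited, target)
# Flag segments that are off by more than 10 percentage points (assumed tolerance).
flagged = [segment for segment, gap in gaps.items() if abs(gap) > 0.10]
print(gaps)
print(flagged)
```

Here rural users make up 40% of the assumed audience but only 10% of the sample, so both segments are flagged. A check like this only covers the attributes a team thinks to record, so it complements careful recruitment rather than replacing it.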

Question Design Flaws

Poor question construction introduces measurement errors into usability studies. Leading questions push participants toward particular responses, while unclear instructions create confusion that affects task performance metrics. These flaws often stem from researchers’ unconscious assumptions about how users think about and interact with product features.

Survey questions need careful crafting to avoid priming bias. Asking “What frustrated you about this feature?” assumes negative experiences and might prevent users from sharing positive feedback. Task instructions that include subtle hints about expected behavior, like “Use the search function to find the product,” can mask navigation problems and create artificially high success rates.

Mitigating Bias in Your UX Process

Creating safeguards against bias requires careful planning and methodological rigor. Pilot testing is especially valuable because it reveals hidden flaws in research design before they affect results. UX teams that build bias checks into each research phase catch potential issues early, before they compound.

Each step in the research process presents opportunities to reduce bias through systematic validation. Cross-functional review panels help spot assumptions that individual team members might miss, while standardized quality checks create consistent frameworks for evaluating research methods. Teams that document their bias mitigation steps create reproducible processes that strengthen the credibility of their findings.

Structured Data Collection

Documentation standards help teams capture consistent, comparable data across test sessions. Clear protocols reduce the influence of individual researcher biases and make results more reliable. Recording methods, participant interactions, and environmental factors creates a complete picture of testing conditions that helps teams identify potential sources of bias.

Using multiple data collection methods provides cross-validation opportunities. Combining quantitative metrics with qualitative observations helps teams spot discrepancies that might indicate measurement problems. When metrics and user feedback tell different stories, teams can dig deeper to uncover hidden biases in their collection methods or testing assumptions.
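
A minimal sketch of this kind of cross-check appears below. It assumes each task has a completion rate and a set of post-task ease ratings on a 1 to 5 scale. The task names, data, and both thresholds are hypothetical.

```python
def find_discrepancies(tasks, min_completion=0.8, rating_floor=3.0):
    """Flag tasks where completion looks healthy but ease ratings do not."""
    flagged = []
    for name, data in tasks.items():
        ratings = data["ease_ratings"]
        if not ratings:
            continue  # no qualitative signal to compare against
        mean_rating = sum(ratings) / len(ratings)
        if data["completion_rate"] >= min_completion and mean_rating < rating_floor:
            flagged.append((name, data["completion_rate"], round(mean_rating, 2)))
    return flagged


# Made-up results for illustration only.
tasks = {
    "checkout": {"completion_rate": 0.92, "ease_ratings": [2, 3, 2, 2]},
    "search": {"completion_rate": 0.88, "ease_ratings": [4, 5, 4, 4]},
    "signup": {"completion_rate": 0.55, "ease_ratings": [2, 2, 3, 1]},
}

discrepancies = find_discrepancies(tasks)
print(discrepancies)
```

Only "checkout" is flagged: most participants finished it, but they rated it as hard. A flag like this marks where to review session recordings and notes. It does not say which of the two signals is wrong.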

Objective Analysis Frameworks

Standardized analysis procedures help teams evaluate data systematically rather than cherry-picking favorable results. Creating clear success criteria before testing prevents post-hoc rationalization of unexpected findings. These frameworks act as guardrails, keeping analysis focused on predetermined objectives rather than drifting toward convenient interpretations.
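
One possible way to fix success criteria ahead of time is to record them as immutable data and evaluate results against them mechanically. The sketch below is an assumption about how a team might do this. The metric names and thresholds are hypothetical.

```python
import operator
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: criteria cannot be edited after they are defined
class Criterion:
    metric: str
    comparison: str   # ">=" or "<="
    threshold: float


_OPS = {">=": operator.ge, "<=": operator.le}


def evaluate(criteria, observed):
    """Check observed metrics against criteria written down before testing."""
    results = {}
    for c in criteria:
        if c.metric not in observed:
            # Surface unmeasured criteria instead of silently dropping them.
            results[c.metric] = None
        else:
            results[c.metric] = _OPS[c.comparison](observed[c.metric], c.threshold)
    return results


# Hypothetical criteria, agreed on before any sessions run.
CRITERIA = (
    Criterion("completion_rate", ">=", 0.85),
    Criterion("median_seconds", "<=", 90.0),
    Criterion("error_rate", "<=", 0.10),
)

# Hypothetical results gathered after testing.
observed = {"completion_rate": 0.90, "median_seconds": 120.0}
outcome = evaluate(CRITERIA, observed)
print(outcome)
```

The outcome shows one pass, one fail, and one criterion that was never measured. Reporting all three makes it harder to quietly highlight only the favorable result.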

Regular peer reviews catch individual biases before they affect design decisions. Fresh perspectives help identify assumptions and interpretations that might not align with actual user behavior. Research groups that welcome diverse opinions and open dialogue produce more balanced interpretations of user data, reducing the risk of collective blind spots in their analysis.

Final Thoughts

Accurate UX metrics come from acknowledging and actively countering bias at each step of the research process. By combining diverse user samples, well-crafted questions, and structured analysis methods, teams can spot potential distortions before they affect product decisions.

The most reliable research emerges when teams pair rigorous methodology with regular self-examination of their assumptions and processes. Research teams that weave bias checks into their daily practice build products that better meet genuine user needs and expectations.
